Jenna DeLuca felt confused and frustrated. It was spring 2025, and this science fair judge had just DQ’d — disqualified — another student project. It was for the same reason as several others. The teen’s project listed questionable research papers among its sources. For some, DeLuca says, “the title would be correct, the journal would be right, but the authors were wrong.” In other cases, she notes, the journal “didn’t even exist.” It was totally bogus.

She realized these fake citations had almost certainly come from a chatbot such as ChatGPT or Gemini. When AI-powered chatbots respond to questions, they sometimes provide made-up answers. These are known as hallucinations.

Any student project with fake citations is a big problem. But these projects were among the most important that the students had ever worked on. They were in the 2025 Regeneron International Science and Engineering Fair (ISEF) finals.

ISEF is so selective that it’s often called the Olympics of science fairs. To qualify, students must first win in a series of smaller, regional science fairs. Only some 1,800 young researchers from around the globe earn a spot each year.

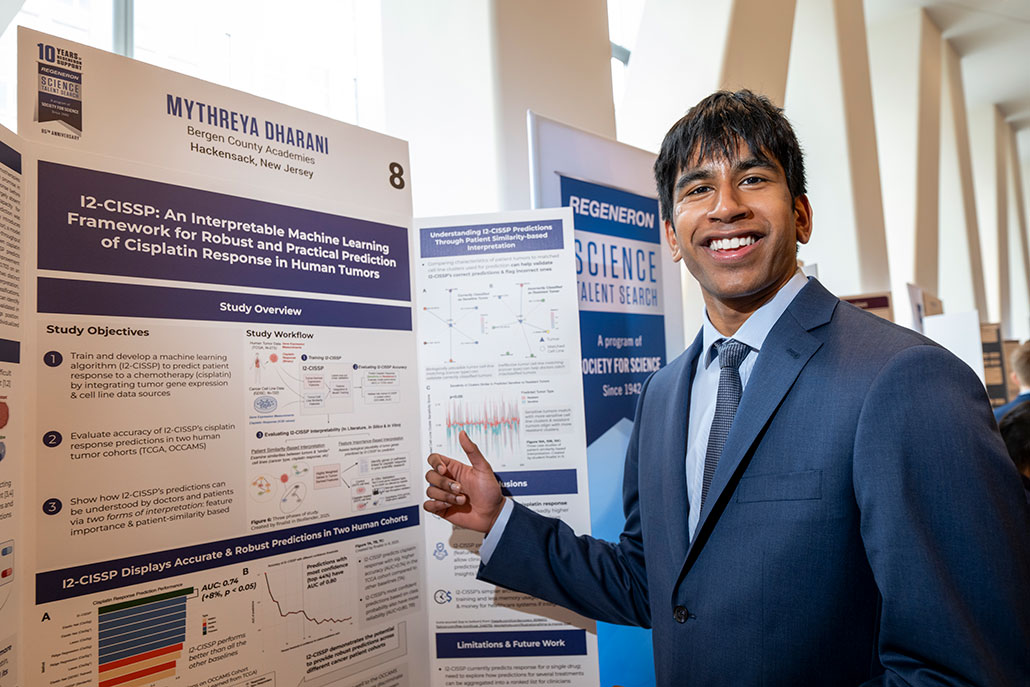

The competition is “inspiring and very motivating,” says Mythreya Dharani. This high school senior from Hackensack, N.J., competed at ISEF in 2025. “You see not only projects within your own category,” he says, but also “in vastly different fields.”

Mythreya Dharani recently participated in two high-level science competitions run by Society for Science. Citations are “not the most exciting part of research,” he admits, but finding them is “still something that you should probably do by yourself.” It’s not worth taking the risk that AI will suggest one that doesn’t exist. Chris Ayers Photography/Licensed by Society for Science

ISEF exists to encourage students, DeLuca notes. Its judges and mentors love seeing talented teens, such as Dharani, succeed in research. Some of his competitors, unfortunately, tripped up over the sources they had cited.

Having to DQ their projects “was frustrating and also kind of heartbreaking,” says DeLuca. She’s the scientific integrity officer at Society for Science in Washington, D.C. The Society runs ISEF (and publishes Science News Explores).

But teens aren’t the only ones seriously affected by AI-hallucinated citations. Increasingly, fake citations are also finding their way into papers published by professional scientists. Some of these scientists may even find they’ve broken federal law (see box below).

After working hard on a research project, science-fair contestants don’t want to be sent home on a technicality — such as a citation mistake due to AI use. That’s why judges warn students to use AI with caution and check over every part of their research carefully. onuma Inthapong/iStock/Getty Images Plus

Putting chatbots to the test

ISEF keeps its judging process confidential. So DeLuca can’t say anything about the student projects that got DQ’d for fake citations, or even how many there were. But “there were a decent amount,” she says. Enough to raise red flags for her and the other judges.

According to ISEF rules: “Artificial intelligence may be used as a project resource.” But it must be cited and properly acknowledged. And, of course, “All materials presented must be in the researcher’s own words.”

DeLuca wondered how fake citations wound up in these students’ projects. They could have made them up. More likely, they asked AI for references without realizing it might hallucinate. And then they used the references without following up and fact-checking them.

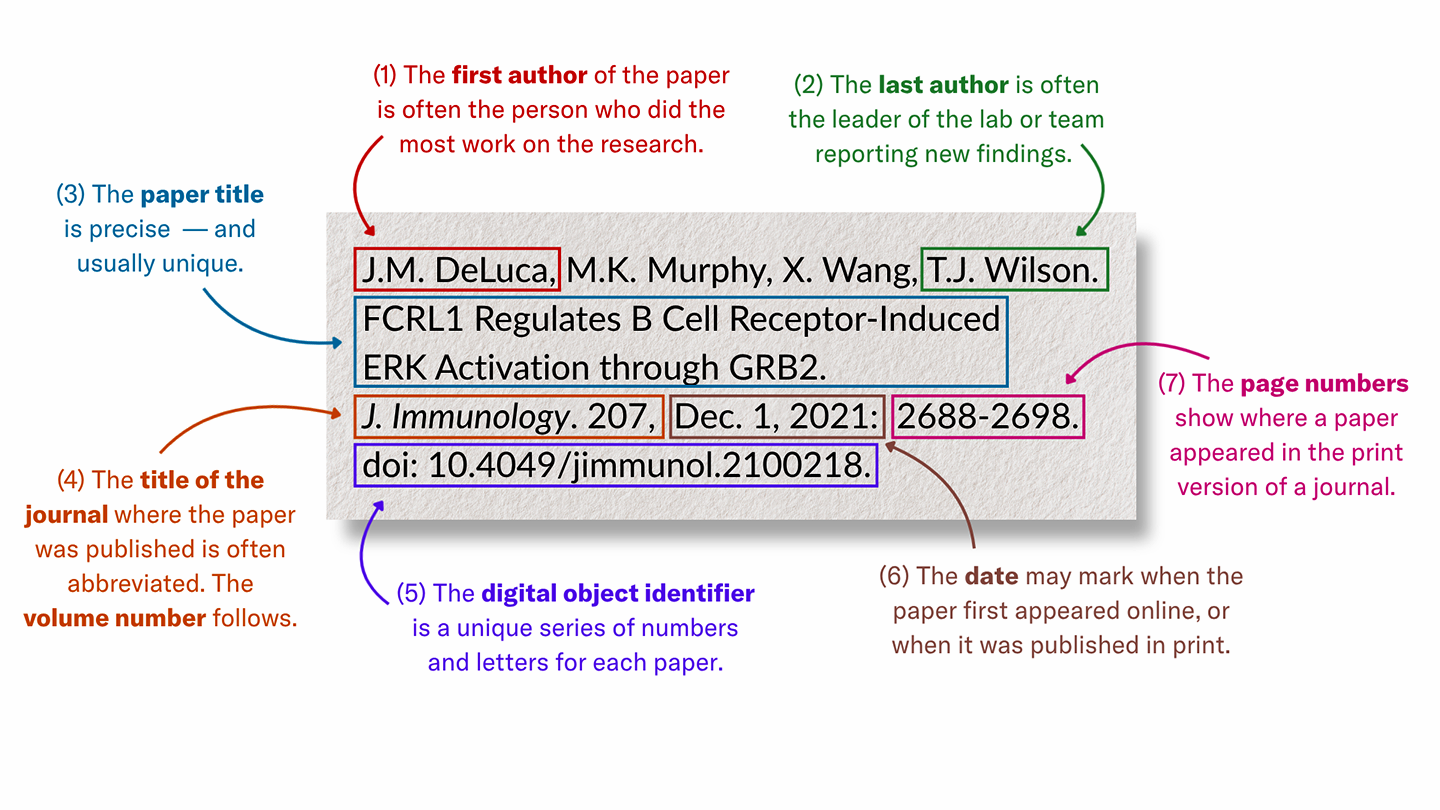

But these weren’t the only possibilities. DeLuca wondered if some students might have used a chatbot to simply format their citations. She’s referring to the process of putting the author, title and other information about a source into some specified order.

To test that last idea, DeLuca did an experiment. She asked a chatbot to format a list of real, but unformatted, references. “It still hallucinated fake information,” she says. The chatbot introduced bogus details into what had been a list of real citations.

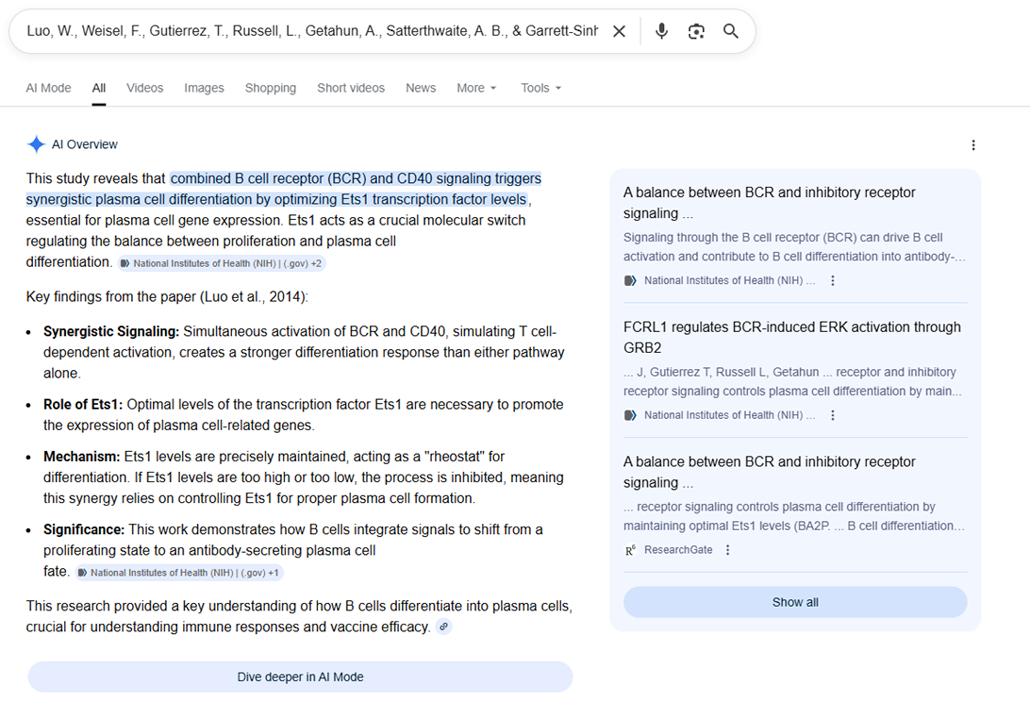

Next, she wanted to see what happened if students tried to verify their citations. Here, she merged information from two real citations to make a fake one. Then she Googled the mashup citation.

An AI summary popped up at the very top of the results. “It specifically said, ‘this is a real article,’” says DeLuca. But she knew it wasn’t.

Here, Google’s AI overview describes a source as if it is real and correct. But it is not. In fact, Jenna DeLuca crafted a fake citation by merging information from two real papers. The AI overview misses this problem entirely and responds as if the source is correct. Jenna DeLuca

DeLuca’s heart sank. Even if students had been careful to check their citations, they could have been misled. They would have had to scroll way past the AI summary to find the mistake.

Dharani isn’t surprised kids might use AI here. “It can admittedly be boring to write citations,” he says. But, he adds, that doesn’t mean students should take shortcuts.

Citations are an essential part of the research process, Dharani notes. New science builds off past ideas. This process only works if past ideas are “reported accurately,” he explains. Crediting others’ ideas “and also acknowledging how your own ideas draw inspiration from others is really important.”

Some students don’t find out their science fair project is going to be disqualified until they reach the competition floor. These days, AI-hallucinated citations could send a participant home before their research even gets judged. demaerre/iStock/Getty Images Plus

Ghost references

Students don’t even have to use AI to accidentally include fake citations.

How? A working scientist might add a hallucinated citation into a paper they’re writing. If no one discovers it during the paper’s review, that citation will get published. Now, students and other researchers will assume this citation is real.

That’s been happening more and more, two new studies find.

In the first, researchers analyzed nearly 18,000 papers. All had been presented at six computer-science conferences in 2024 and 2025. At least 300 papers had one or more hallucinated citations. The highest number of them came from the most recent meeting.

The second analysis took a look at 4,000 randomly selected papers, book chapters and conference proceedings. All had come from five leading scientific publishers last year. At least 65 had a fake citation, two analysts reported in Nature on April 1. Based on this rate, they say, it’s possible that tens of thousands of the scientific works published in 2025 also contain hallucinated citations.

People are calling these ghost references, says Ben Williamson. He’s a digital-education expert at the University of Edinburgh in Scotland.

“These are pieces of work that are not alive,” he explains. “They’re haunting us — sort of shambling on in their undead form.”

Williamson edits the research journal Learning, Media and Technology. He’s caught many fake citations in papers submitted to this journal. Late last year, he found an especially surprising one. This bogus cite claimed he was its author.

Williamson looked up the paper he had supposedly written and found several versions. All were titled “Education governance and datafication.” His listed co-authors differed, as did the dates. The citations also linked to different journals. One version had been cited in 42 other papers. (That number has since climbed to at least 77.) Keep in mind, Williamson stresses, this is a ghost paper — “something I did not write, that does not exist.”

Most of the papers that cite this ghost reference, he says, are poor quality. And most are AI-generated, he suspects. However, at least one citation to the bogus paper had made it into a journal with a good reputation.

The importance of staying curious

To avoid problems such as ghost references, some people steer clear of AI. Jennifer Borgioli Binis is one of them. “I don’t use any AI of any type,” she says. Based in Buffalo, N.Y., she’s an expert in the history of education (and moderator on the subreddit AskHistorians).

Chatbots use what’s known as generative AI. For her, the costs of this type of AI — “to the environment, to art, to people” — outweigh any benefit it offers.

Williamson, too, is cautious. “AI is dangerous at all levels of learning,” he believes — but especially to younger students. Why? Getting an easy answer stops us from learning how to dig deeper and ask harder questions.

Binis agrees that everyone should value curiosity over quick, easy answers. “I try to keep advocating for us to be more curious about the information we find online,” she says. When a fake citation pops up, Binis sees that as a sign that someone, somewhere “has stopped being curious.” They just wanted to get the job done.

Dharani is more comfortable using AI. In fact, his ISEF project involved developing an AI model to help doctors who are treating cancer.

“I think it’s bad advice to say that you should just stay away from chatbots completely,” Dharani argues. Instead, he suggests we should be asking: “What are the things that we can trust them with and what are the things that we should be more cautious of?”

Chatbots have been caught generating plenty of bogus citations, also called ghost references. These point to source material that does not exist. Find out what they are so you can identify sources that are not trustworthy.

At the beginning of a project, Dharani may ask AI to suggest sources on a topic. Then, crucially, he follows those links to actually read the papers cited. These give him new ideas. A chatbot, he says, “is just giving you a direction.” But you still have to do the real work of learning and experimenting yourself.

It’s not your fault if AI “gets things wrong or fabricates or hallucinates a lot,” says Williamson. But, he adds, we are responsible for the accuracy of anything we produce. Science fair judges as well as universities and workplaces will hold AI users accountable.

For her part, DeLuca is doing her best to spread the word about AI’s risks in citations. She’s done presentations. She’s also helped add a section to an ISEF guidebook about appropriate AI use. “We’re trying to educate as many people as we can,” she says.

As for Dharani, he refined his ISEF project for another elite Society for Science competition: the Regeneron Science Talent Search (STS). This spring, he was selected as a finalist. The teen is now thinking about training to become a physician scientist.

To achieve that dream, he knows he will have to work hard and avoid relying on AI when he should be using his own creativity and curiosity.
